Who Writes Radiology Impressions Best? Humans or AI?

Researchers find that radiologists prefer custom AI-generated and human-created impressions over generic AI-generated impressions.

Oncologic imaging reports are often long and detailed. Think 40 CTs per day at about 500 words each: that adds up to 100,000 words over a five-day workweek. That’s longer than “Life of Pi” or “The Hobbit” – yes, seriously! Imagine that every week, for years.

Not only is this time-consuming, it may also contribute to the 44-65% burnout rate seen in radiology, which is leading many radiologists to retire early and slowing the entry of new radiologists into the field.

The Research Landscape

Recent AI advancements enable automated generation of radiology impressions. While initial evaluations have been promising, the published quality measures have been limited, with no publications to date on complex studies or on usefulness for abdominal imagers.

“Radiology reports are cognitively demanding, and the impression requires an additional layer of synthesis and judgement,” said Andrew Del Gaizo, MD, CMIO at Rad AI. “That combination contributes meaningfully to workload and burnout, and is exactly where AI has the potential to help.”

Limited Studies on Effectiveness … Until Now

In 2026, a group of researchers, including Dr. Del Gaizo, set out to evaluate the quality, safety and clinical utility of AI-generated radiology impressions compared to human-authored impressions across multiple clinical stakeholder groups.

They took a retrospective sample of 200 de-identified oncologic CT reports dictated by four abdominal radiologists (50 per radiologist) and compared three impression types for each report:

- Human-authored: the dictating radiologist’s original impression

- Custom AI-generated (Rad AI Impressions): proprietary radiology-specific model fine-tuned on institutional data

- Generic AI-generated: publicly available large language model (LLM) with a radiology-tailored prompt

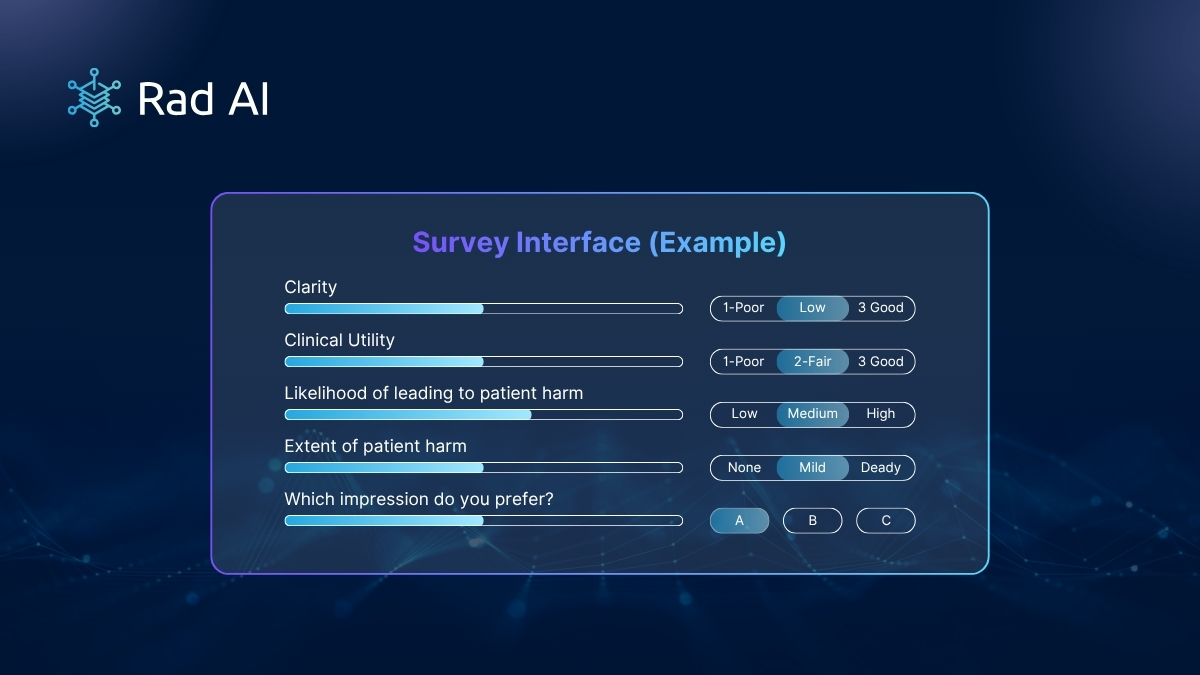

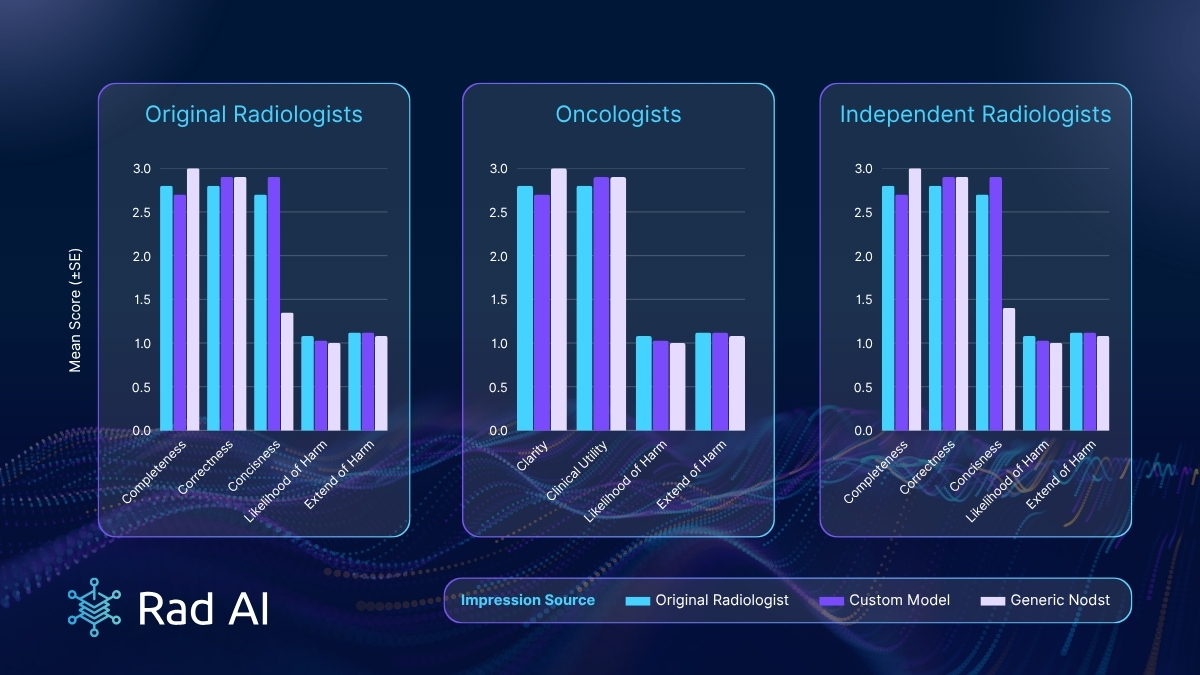

The original radiologists were blinded to the impression source and rated each impression on completeness, correctness and conciseness. All reviewers rated the impressions for clarity, clinical utility and likelihood of leading to patient harm. Quantitative and reliability metrics included word count, item count, BLEU score, generation time and inter-rater reliability (ICC).
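For readers who want a feel for the simplest of these metrics, here is a minimal Python sketch of word count and item count, summarized as mean and standard deviation. It is an illustration only, not the study's method: the counting rules (whitespace-separated words, numbered lines as items), the function names and the two sample impressions are all assumptions made for this example. BLEU and ICC are left out because they need reference texts and multiple raters.

```python
import re
from statistics import mean, stdev


def word_count(impression: str) -> int:
    """Count whitespace-separated tokens (list numbers like '1.' are included)."""
    return len(impression.split())


def item_count(impression: str) -> int:
    """Count lines that begin with a list number such as '1.' or '2)'."""
    return len(re.findall(r"^\s*\d+[.)]\s+", impression, flags=re.MULTILINE))


def summarize(values):
    """Return (mean, sample standard deviation) for a list of numbers."""
    return mean(values), stdev(values)


# Hypothetical impressions written for this example; not taken from the study.
impressions = [
    "1. No evidence of metastatic disease.\n2. Stable hepatic cyst.",
    "1. New 8 mm right lower lobe nodule.\n2. Stable adenopathy.\n3. No ascites.",
]

word_counts = [word_count(text) for text in impressions]
item_counts = [item_count(text) for text in impressions]

avg_words, sd_words = summarize(word_counts)
print(f"Word count: {avg_words:.1f} ± {sd_words:.1f}")
print(f"Item counts: {item_counts}")
```

A real evaluation would need an agreed definition of an "item" (for example, how to treat unnumbered or multi-sentence impressions), which this sketch does not attempt.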

“We wanted to move beyond anecdotal impressions and rigorously evaluate how AI-generated impressions perform in real clinical contexts, across the different stakeholders who rely on them,” said Dr. Del Gaizo.

What They Found: Word Count, Impression Items and Generation Time

The study resulted in a variety of findings, including:

- Word count: Generic model impressions were substantially longer than both original and custom model AI impressions, with humans at 41.2 ± 21.4 words, custom AI model (domain-specific) at 34.2 ± 17.4 words and generic AI model at 75.1 ± 20.4 words.

- Impression items: Generic model impressions contained more impression items, on average. Humans were at 2.9 ± 1.2, custom AI model (domain-specific) at 3.0 ± 1.2 and generic AI model at 6.3 ± 1.6.

- Mean generation time: Custom AI model (domain-specific) mean generation time was significantly faster at 1.9 ± 0.8 seconds compared to the generic AI model at 11.6 ± 3.6 seconds.
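To put the figures above side by side, the short Python sketch below divides the reported means by one another. The ratios are back-of-the-envelope arithmetic on the means listed above, not statistics reported by the study, and they ignore the ± spreads; the variable and function names are made up for this example.

```python
# Means as listed above; the ± spreads are not used in this rough comparison.
word_count_means = {"human": 41.2, "custom": 34.2, "generic": 75.1}
item_count_means = {"human": 2.9, "custom": 3.0, "generic": 6.3}
generation_seconds = {"custom": 1.9, "generic": 11.6}


def ratio(numerator: float, denominator: float) -> float:
    """Ratio of two means, rounded to one decimal place."""
    return round(numerator / denominator, 1)


words_generic_vs_human = ratio(word_count_means["generic"], word_count_means["human"])
words_generic_vs_custom = ratio(word_count_means["generic"], word_count_means["custom"])
items_generic_vs_human = ratio(item_count_means["generic"], item_count_means["human"])
time_generic_vs_custom = ratio(generation_seconds["generic"], generation_seconds["custom"])

print(f"Generic vs. human word count: {words_generic_vs_human}x")
print(f"Generic vs. custom word count: {words_generic_vs_custom}x")
print(f"Generic vs. human impression items: {items_generic_vs_human}x")
print(f"Generic vs. custom generation time: {time_generic_vs_custom}x")
```

Read this way, the generic model's impressions were roughly twice as long and itemized as the human and custom ones, and took about six times as long to generate.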

What They Found: Radiologist and Oncologist Preferences

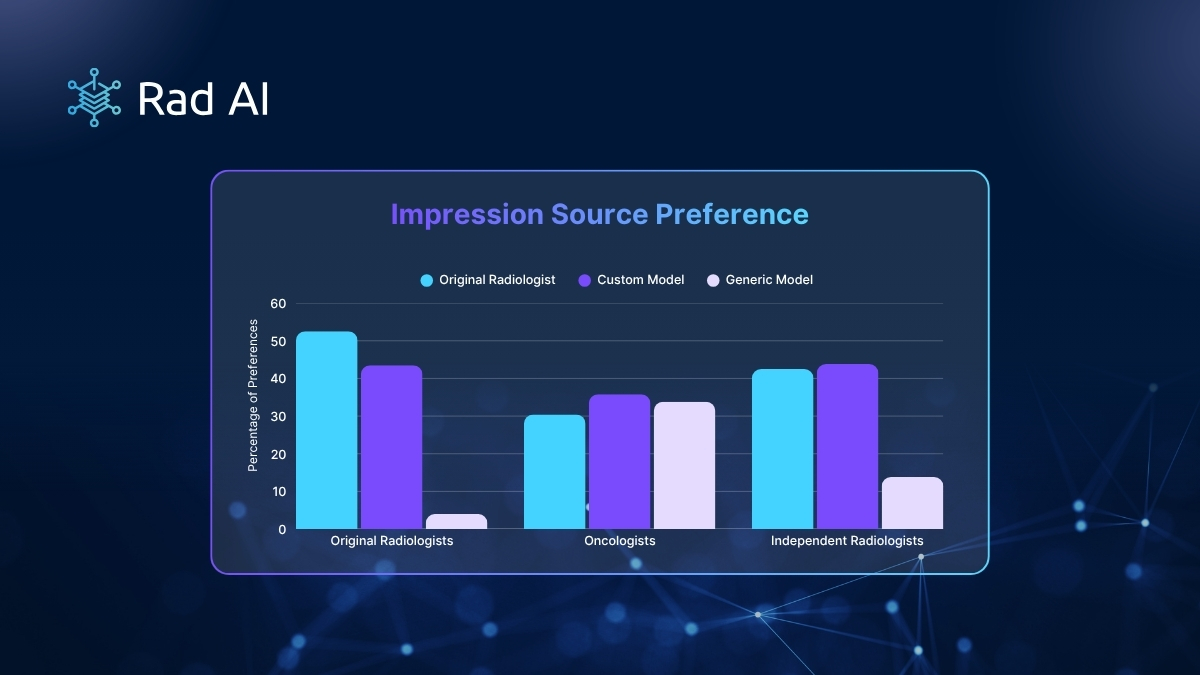

So, what did the radiologists and oncologists think? Radiologists preferred human and custom AI over generic AI (p < 0.001). Oncologists were more evenly split, with a slight preference for custom AI that was not statistically significant (p = 0.21-0.63).

Results Overview

Custom and generic model impressions demonstrated near parity with human-authored impressions across most quality metrics. The exception: Generic model impressions were significantly less concise. Patient harm ratings were uniformly low.

Key author discussion points from the results:

- Domain adaptation and stylistic alignment: Strong preference among radiologists for original and customized AI impressions highlights the importance of domain adaptation and stylistic alignment in clinical text generation.

- Exhaustive enumeration of findings: Generic models demonstrated a tendency toward exhaustive enumeration of findings at the expense of prioritization and signal-to-noise ratio.

- Explanatory context over brevity: Oncologists demonstrated no statistically significant preference, suggesting they sometimes value explanatory context and narrative clarity more than brevity alone.

- Shift in evaluation and deployment: Results support a shift in how AI-generated radiology impressions are evaluated and deployed.

- User-specific preferences: Rather than optimizing models toward a single idealized output, greater emphasis should be placed on adaptability, editability and alignment with user-specific preferences. This perspective aligns with frameworks emphasizing human-AI collaboration rather than automation alone.

Why This Matters Clinically

When it comes to studies in the field, clinical impact is paramount. It’s what matters most to radiologists and, ultimately, impacts patients. Here’s what researchers concluded.

- Workload and quality balance: Automated impression generation may help alleviate abdominal radiologist workload while maintaining quality across stakeholders.

- Real-world readiness and customization: Findings highlight readiness for complex oncologic exams, though preference differences underscore the need for local customization.

“AI can help draft high-quality impressions, but the real opportunity is in the customization,” said Dr. Del Gaizo. “With humans in the loop, these systems can adapt to how different clinicians think, communicate and make decisions.”

See the full study for more details.

Interested in bringing AI to your radiology department or practice?

Learn about Rad AI Impressions.

Citation

Phadke, S., Suresh, N., Allen, Z., Balagopal, A., Chan, S., Shah, A., Winter, M., Lam, C., Rose, T., Araujo, C., Ahmed, A., Imanirad, I., Berland, L., & Del Gaizo, A. (2026). Comparison of AI generated radiology impressions: a multi-stakeholder evaluation. npj Digital Medicine. https://www.nature.com/articles/s41746-026-02586-6